The Research Behind Spradley

How conversational AI bridges the gap between qualitative depth and organizational scale — and what the peer-reviewed evidence says about its validity.

Magnus Størum

CEO - Spradley

The constraint that shaped an industry

Understanding people at any meaningful depth has always required conversation. Not checkboxes. Not scales from one to five. Conversation — the kind where you follow a thread, ask why, sit with a pause, and let someone arrive at the thing they didn't plan to say.

The problem is that conversations don't scale. A single qualitative interview takes an hour. Transcription, coding, analysis, synthesis — several more. Multiply that across a few hundred employees, and you're looking at months of work by trained researchers or consultants.

So a pragmatic choice was made: trade depth for breadth. Build feedback systems around surveys — standardized, efficient, deployable to thousands in a single afternoon. Those systems have been enormously useful. They have also been, by design, incapable of capturing why. Why the engagement score dropped. Why the team that looks fine on paper is quietly falling apart. Why someone who seemed committed last quarter is now interviewing elsewhere.

This wasn't a failure of imagination. It was a constraint of physics. You could have depth or scale. Never both. That constraint shaped an entire industry.

It doesn't hold anymore.

What understanding actually requires

Here's the difference the old constraint made invisible.

A survey tells you a team's morale is low. A real conversation tells you that a reorganization six months ago broke the informal mentoring network that had held a department together for years — that the three people who always knew the answers to hard questions all ended up in different departments, and nobody replaced what they'd been doing because it was never in anyone's job description. The people who stayed feel abandoned by the people who left. Nobody has said any of this out loud because the company narrative is that the reorg was a success.

The number might be the same. The understanding is entirely different.

The anthropologist Clifford Geertz called this thick description — understanding that captures not just what happened, but what it means to the people living it. Working within the same intellectual tradition, James P. Spradley developed this idea into a practical interview method. Published in 1979, The Ethnographic Interview became one of the foundational texts of qualitative social science. Spradley's method didn't start with hypotheses or impose categories. It followed the respondent's own language, their own meaning-making. The interviewer's job wasn't to extract answers. It was to learn the world as the other person sees it.

For decades, this kind of insight was considered the gold standard of organizational understanding. It was also considered inherently unscalable. The depth that made it powerful was the very thing that made it expensive.

We named our company after James Spradley because we believe his method was right. We also want to be precise. We are not doing ethnography. Ethnography requires prolonged engagement, the researcher's own presence as an instrument of inquiry, and a relationship between observer and observed that unfolds over time. What we're building is something new — a method inspired by the ethnographic tradition, made possible by AI, and designed for a context Spradley never imagined: understanding entire organizations at once.

We think being honest about that distinction makes the work more credible, not less.

What the evidence says

An AI interview is not a chatbot running through a script. The distance between "AI that asks questions" and "AI that knows how to listen" is enormous — and that distance is what separates data collection from understanding.

Done well, an AI interviewer uses non-directive questioning, adaptive follow-up, and cognitive empathy to conduct conversations that hold up against trained human researchers. The scientific foundation for this is now peer-reviewed and published in one of the world's leading academic journals.

Why people say more to AI

This pattern — lower perceived rapport, equivalent or higher disclosure — keeps appearing across studies. Social desirability bias, the well-documented tendency for people to answer in ways they believe will be viewed favorably, has been shown to shift survey responses by 10–20% on sensitive topics. The effect intensifies whenever a human is present, whether in person or on the phone.

In traditional employee listening, the presence of a human — whether a consultant, an HR partner, or a manager — introduces a social dynamic that shapes what people are willing to say. Not because the listener is untrustworthy. Because people naturally manage impressions around other people. They calibrate. They hedge. They leave out the thing that feels too risky to say aloud.

Anthropic's research team, reflecting on the candor in their 80,508 interviews, observed that respondents appeared to find in AI a freedom from judgment they hadn't experienced before. In their analysis, 69% of professionals mentioned social stigma around AI use at work, which suggests that workplace conversations about technology itself carry impression-management pressures that AI-led formats can sidestep.

A well-designed AI conversation doesn't eliminate social desirability entirely. But the evidence suggests it lowers the threshold considerably — especially on the topics that matter most: the ones people have been thinking about but haven't found the right moment, or the right audience, to say.

From conversations to insight

Collecting conversations is the first half. The second — and the one most AI tools skip entirely — is making sense of what was said.

A hundred honest conversations are worth nothing if nobody reads them. And no leadership team has time to read a hundred transcripts. The gap between collecting qualitative data and turning it into something people can act on is where most listening programs stall — not because the data wasn't there, but because nobody could process it at scale without losing the meaning.

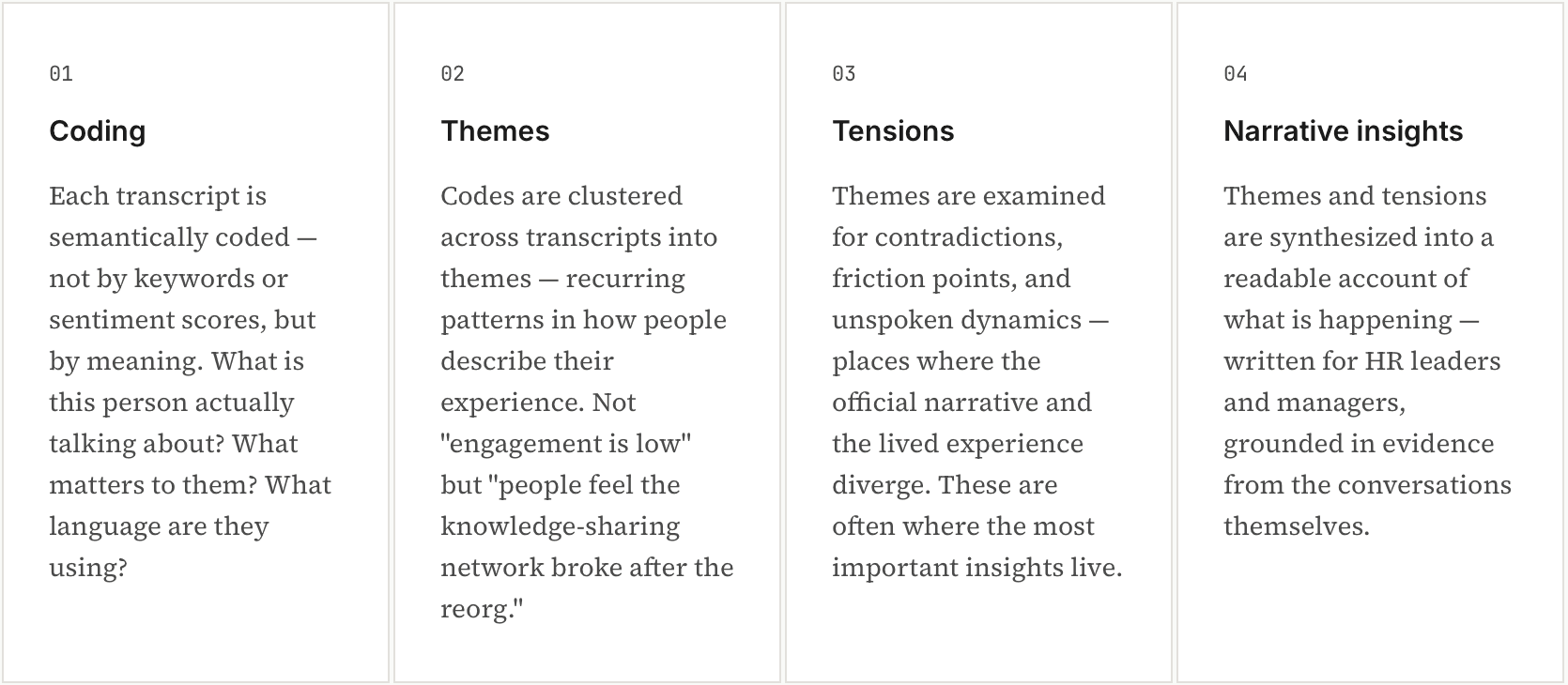

This is where Spradley's analysis engine comes in. After each conversation, the transcript moves through a structured analytical process designed to preserve the richness of what people said while making it legible to the people who need to act on it.

The output isn't a score. It isn't a dashboard. It's a structured account of what your people are actually experiencing — in their own language, organized into patterns visible across teams, departments, or the whole organization.

What Spradley does not do is tell you what to do about it. That's deliberate.

The HR leader who reads that three teams lost their informal knowledge-sharing network after a reorganization knows her organization better than any tool does. She knows which manager to talk to, which team needs attention first, which intervention fits the culture. What she lacked wasn't judgment — it was the information that would let her use it. Spradley provides the material. The interpretation, the prioritization, and the action remain human.

The shift

This isn't a technology story. It's about a question people leaders have carried for years, mostly quietly: do we actually understand what's happening here?

The engagement score says 7.1, up from 6.8. That's progress. But the leader presenting that number knows things it doesn't capture. Three strong people in product have gone quiet. The new ops manager hits his targets, but his team hasn't raised a single concern in months — which isn't reassuring. The post-merger integration everyone calls a success produced a layer of polite compliance that shows up nowhere in the data.

She knows all of this the way attentive leaders do — through hallway conversations, offhand remarks, the tone of voice when someone says "everything's fine." Small signals that never make it into a report.

The problem was never that this knowledge didn't exist. It was that it couldn't be gathered systematically across an entire organization. It lived in the gap between what people say on a five-point scale and what they say when someone actually listens.

Clifford Geertz called this the difference between thin and thick description. A thin description gives you the score. A thick one tells you what it means to the people living it.

For fifty years, there was no way to close that gap at scale. The tools to listen to everyone, at once, now exist. And the research backing them is peer-reviewed in one of the world's top academic journals, validated at the largest scale qualitative research has ever seen, and consistent across every domain in which it has been tested.

What remains is the choice to listen differently.